The government uses Claude, as well as AI technologies from Google and OpenAI, among others, in a secure framework provided by Palantir.

- The six-month phase-out period for Anthropic’s Claude shows how much the government needs it, according to Burry.

- The U.S. on Friday ordered defence contractors to stop working with Anthropic.

- Palantir, which provides AI services to government agencies by integrating with various models, is being closely watched by investors.

The U.S. government’s decision to grant a six-month phase-out period for Anthropic’s Claude AI, even after labeling it a supply-chain risk, highlights how strategically important the technology has become, prominent investor Michael Burry said.

According to the “Big Short” investor, the move suggests that relying solely on Palantir Technologies Inc., paired with AI models from alternative providers, is not seen as an adequate substitute.

“Removing Claude was a Trumpian thing to do. He and his people were offended. The 6-month phase out was the military saying uh, we need Claude for a minute here, and no, the Palantir wrapper with those other models alone is not enough,” Burry said on X on Sunday. “Shows the stickiness is Claude’s tech, not Palantir’s.”

The Donald Trump administration and Anthropic have been feuding for months over how the Pentagon can use its AI models. Trump on Friday ordered agencies to stop working with the company, and the Department of War designated it a security threat and risk to its supply chain. The designation means that all of the government’s vendors would have to stop using Anthropic’s technology.

Understanding Palantir Plus AI In Defence

The outcome marks a significant setback for Anthropic, and stock-market investors, including Burry, are focused on what it means for the public-company landscape, particularly for Palantir.

Burry disclosed a short position in Palantir in November, questioning at the time the company's soaring, AI-hype-driven valuation. Since then, Burry has also targeted what he called ‘nefarious’ accounting practices at the firm and its CEO’s huge spend on a personal jet, and has forecast a sharp drop in the stock.

The U.S. government and military generally do not deploy general-purpose AI models (such as ChatGPT, Claude, or Gemini) directly for sensitive tasks. Instead, they embed these models inside secure data platforms and frameworks such as Palantir’s products or accredited cloud environments.

Palantir’s platforms, like Gotham, Foundry, and AIP (AI Platform), serve as the substrate for AI integration. Claude is deployed inside Palantir via Amazon Web Services’ GovCloud platform. AI models from OpenAI and Meta also work within Palantir’s framework.

Investors are curious to see whether and to what extent Palantir’s business would be affected by Claude’s removal from federal work. Palantir, which earned nearly $2 billion in revenue from the U.S. government last year, is one of the most-watched AI stocks after an incredible surge over the last two years.

Retail’s View On PLTR

Retail investors are also curious because, despite blowout results last month, Palantir shares still trade about 35% below their November peak.

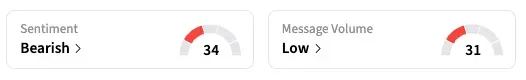

Stocktwits sentiment for PLTR remained in the ‘bearish’ zone as of late Sunday, unchanged from the start of the week.

“Anthropic is being shown the door by Hegseth. PLTR will be the main beneficiary,” said a retail user.

“$PLTR Claude and now OpenAI cannot operate in the highly confidential US military environment without Palantir's AI operating system. Period!” posted another.

Anthropic Out, OpenAI In?

Just after the government announced its decision on Claude, OpenAI announced that it had struck a deal allowing the Department of Defense to use its AI models in the department’s classified network.

Meanwhile, Claude has overtaken ChatGPT to become the number one free app on Apple’s App Store.

For updates and corrections, email newsroom[at]stocktwits[dot]com.